European businesses are facing a massive technological dilemma. On one hand, the demand for AI-powered analytics (such as real-time crowd counting, predictive maintenance, and smart healthcare diagnostics) is skyrocketing. On the other hand, the General Data Protection Regulation (GDPR) allows fines of up to €20 million or 4% of global annual turnover, whichever is higher, for the most serious mishandling of personal data. Sending raw video feeds or sensitive sensor data to a centralized cloud for processing is increasingly viewed as a serious privacy risk.

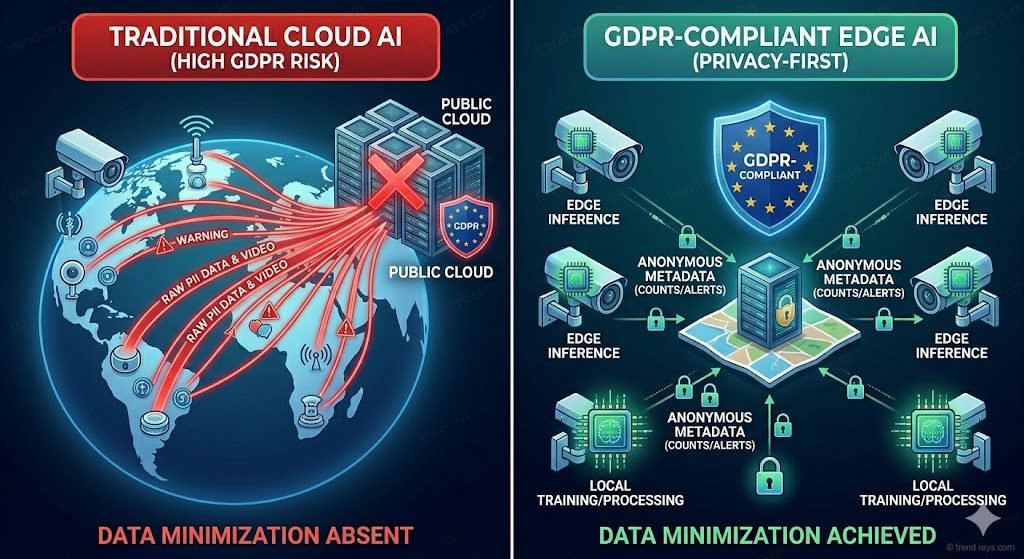

The solution to this bottleneck is “Privacy-by-Design,” and in 2026, that increasingly means moving to Edge AI. By processing data at the source and transmitting only anonymized metadata, businesses can use artificial intelligence while substantially reducing their exposure to GDPR liabilities.

What Actually is the GDPR, and Why Does it Target AI?

Before diving into the hardware, it is critical to understand the legal framework driving this shift. Adopted in 2016 and applicable since May 2018, the General Data Protection Regulation (GDPR) is the European Union’s comprehensive data privacy law. Its core objective is to give individuals control over their personal data and to hold organizations accountable for how they collect, store, and process that information.

AI computing inherently clashes with several foundational GDPR principles:

- Data Minimization: GDPR mandates that you only collect the absolute minimum data needed for a specific task. Traditional AI, however, is data-hungry and thrives on vacuuming up massive datasets to improve its accuracy.

- Purpose Limitation: Under GDPR, data collected for one specified purpose cannot be further processed in a way that is incompatible with that purpose. AI models often find new, hidden correlations in historical data, and acting on them can amount to unauthorized repurposing.

- The Right to be Forgotten (Erasure): If a user requests their data be deleted, a company must erase it. However, if that user’s data was already used to train a machine learning model, “unlearning” that specific data point from a complex neural network is technically nearly impossible.

The Business Impact: Advantages and Disadvantages of AI under GDPR

For business leaders and IT directors, navigating the intersection of AI and GDPR presents distinct challenges, but also massive strategic opportunities.

The Disadvantages (The Compliance Burden)

- High Operational Costs: Developing AI legally in Europe requires rigorous Data Protection Impact Assessments (DPIAs), constant auditing, and strict human oversight.

- The “Black Box” Problem: GDPR Article 22 restricts decisions based solely on automated processing that significantly affect individuals, and its transparency rules entitle them to meaningful information about the logic involved (often described as a “Right to Explanation”). Because deep learning models are notoriously opaque (often acting as a “black box”), businesses can face severe legal hurdles if they cannot clearly explain how their AI arrived at a specific conclusion (e.g., denying a loan or flagging a resume).

- Restricted Training Data: The inability to freely scrape the internet or hoard historical customer data means European AI models sometimes require more synthetic data or specialized training to match the capabilities of unregulated models developed overseas.

The Advantages (The Strategic Moat)

- Trust as a Competitive Advantage: In 2026, corporate and consumer trust is a primary currency. B2B buyers, especially in healthcare, finance, and government, increasingly favor vendors who can prove their AI is compliant. Compliance is no longer just legal defense; it is a premium sales feature.

- Higher Quality Data Outputs: Because GDPR forces companies to practice strict “data hygiene” (cleaning, categorizing, and securing data), the resulting AI models suffer from far less “garbage in, garbage out” syndrome. The models become more accurate and less prone to bias.

- Driving Technological Innovation: The strict rules of GDPR have pushed European tech companies to innovate. They are a major reason why privacy-preserving technologies, like Edge AI and Federated Learning, are advancing so rapidly.

How does Edge AI help with GDPR compliance?

Edge AI helps with GDPR compliance by utilizing local data processing. Instead of transmitting raw, sensitive data (like facial imagery or medical metrics) to a centralized cloud, the AI inference happens directly on the local hardware. The device instantly converts the raw data into anonymous metadata (such as a simple people count or temperature reading) and immediately discards the original file, ensuring no Personally Identifiable Information (PII) ever leaves the device or is stored long-term.

By doing this, Edge AI directly supports the difficult GDPR mandates of Data Minimization and Purpose Limitation, because only the data needed for the stated task ever leaves the device.

Why Cloud AI is Failing the European Privacy Test

For years, the standard AI model involved deploying “dumb” sensors that streamed massive amounts of data to massive cloud servers for processing. In the current regulatory climate, this architecture is failing for several reasons:

- The Transit Vulnerability: Data is most vulnerable when it is moving. Streaming raw video across networks opens up multiple vectors for interception.

- Data Sovereignty and the US CLOUD Act: If your cloud servers are hosted outside the EU, or operated by a US-based company subject to the CLOUD Act, you risk violating GDPR’s strict rules on international data transfers.

- Latency and Bandwidth Costs: Sending heavy data streams (like 4K video for smart city traffic monitoring) to the cloud creates unacceptable latency and astronomical bandwidth costs.
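
To put the bandwidth point in rough numbers, here is a back-of-the-envelope sketch. The 15 Mbit/s bitrate for a compressed 4K stream and the 200-byte metadata message sent once per second are illustrative assumptions, not measurements; substitute the figures from your own cameras.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def daily_megabytes(bits_per_second: float) -> float:
    """Convert a sustained bitrate into megabytes transferred per day."""
    return bits_per_second * SECONDS_PER_DAY / 8 / 1_000_000


# Assumed values for illustration only.
video_bps = 15_000_000   # one compressed 4K stream at 15 Mbit/s
metadata_bps = 200 * 8   # one 200-byte count message per second

video_mb = daily_megabytes(video_bps)
metadata_mb = daily_megabytes(metadata_bps)
ratio = video_mb / metadata_mb

print(f"raw video: {video_mb:,.0f} MB/day")
print(f"metadata:  {metadata_mb:,.2f} MB/day")
print(f"video is roughly {ratio:,.0f}x larger")
```

Under these assumptions a single camera uploads about 162 GB of video per day, versus roughly 17 MB of metadata.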

Key Pillars of a GDPR-Compliant Edge AI Architecture

Building a compliant system requires a combination of specialized hardware and decentralized software models.

1. On-Device Inference & Immediate Data Destruction

The cornerstone of privacy-first AI is processing power at the edge. Smart cameras and IoT sensors equipped with dedicated AI chips use computer vision to analyze frames locally. They extract the necessary insight and delete the raw video frame in milliseconds. Because the video is never saved, there is no stored footage containing PII to protect.
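
The analyze-then-destroy pattern can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: `process_frame`, `CountEvent`, and `fake_detector` are hypothetical names, and the detector is a stand-in for a real on-device model.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class CountEvent:
    """Anonymous metadata: the only record meant to leave the device."""
    sensor_id: str
    timestamp: str
    people_count: int


def process_frame(frame: bytearray,
                  detect_people: Callable[[bytearray], int],
                  sensor_id: str) -> CountEvent:
    """Run inference on one frame, then overwrite the raw pixels in place."""
    try:
        count = detect_people(frame)
    finally:
        # Zero the buffer even if inference raises, so raw pixels do not linger.
        frame[:] = bytes(len(frame))
    # Round the timestamp down to the minute to make events harder to link.
    ts = datetime.now(timezone.utc).replace(second=0, microsecond=0).isoformat()
    return CountEvent(sensor_id=sensor_id, timestamp=ts, people_count=count)


def fake_detector(frame: bytearray) -> int:
    """Stand-in for a real model (assumption): counts bytes equal to 255."""
    return frame.count(255)


frame = bytearray([0, 255, 0, 255, 255, 0])
event = process_frame(frame, fake_detector, sensor_id="entrance-1")
print(event.people_count, bytes(frame))
```

Zeroing one Python buffer does not guarantee that no other copy exists: camera drivers and inference runtimes may hold their own buffers, so a real device needs the same discipline at every layer.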

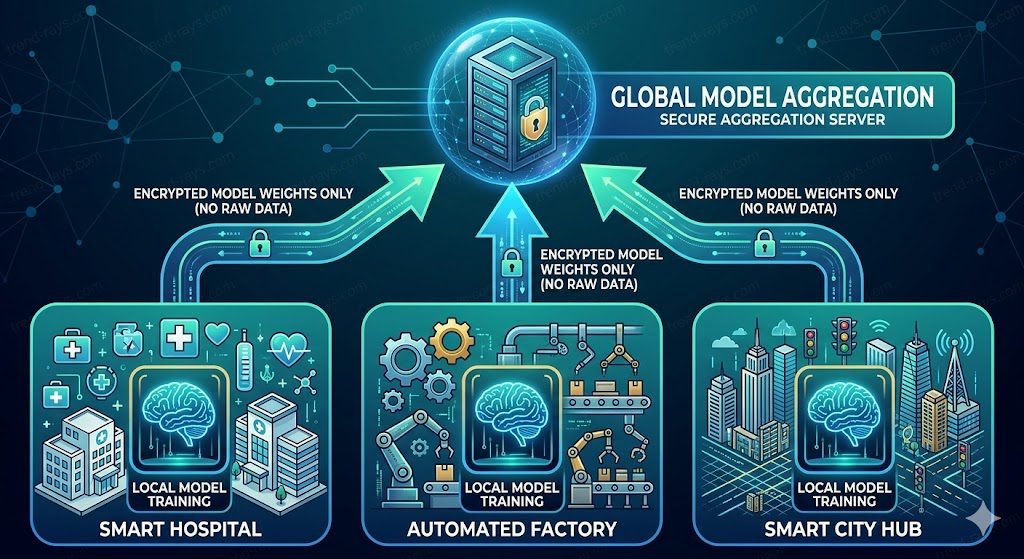

2. Federated Learning (FL)

Federated Learning is the breakthrough technology driving compliant AI in 2026. FL allows multiple edge devices (like hospital monitors across different branches) to collaboratively train a shared AI model without ever exchanging their raw local data. The devices analyze their local data, learn from it, and only share the “model updates” or algorithmic “weights” with a central server.
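
The server-side step can be illustrated with federated averaging (FedAvg), a common aggregation rule in which the server takes a sample-weighted mean of the weights each device sends. The sketch below uses plain lists and made-up numbers; `federated_average` and the two hospital updates are hypothetical, and real deployments typically use a dedicated framework.

```python
from typing import List, Sequence, Tuple

# (local model weights, number of local training samples)
ClientUpdate = Tuple[Sequence[float], int]


def federated_average(updates: List[ClientUpdate]) -> List[float]:
    """Sample-weighted mean of client weight vectors (FedAvg aggregation)."""
    if not updates:
        raise ValueError("need at least one client update")
    total = sum(n for _, n in updates)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    dim = len(updates[0][0])
    if any(len(weights) != dim for weights, _ in updates):
        raise ValueError("all clients must send vectors of the same length")
    averaged = [0.0] * dim
    for weights, n in updates:
        share = n / total  # clients with more data get more influence
        for i, w in enumerate(weights):
            averaged[i] += w * share
    return averaged


# Made-up example: two sites share weights only, never patient records.
hospital_a = ([0.0, 2.0], 100)
hospital_b = ([4.0, 6.0], 300)
global_weights = federated_average([hospital_a, hospital_b])
print(global_weights)
```

Model updates can still leak information about the underlying data, which is why federated setups are often combined with secure aggregation or differential privacy.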

3. Hardware-Level Security

If data is processed at the edge, the physical box sitting on the factory floor or street pole must be hardened against tampering. A GDPR-compliant edge architecture requires robust device security, including Trusted Platform Modules (TPMs) to protect cryptographic keys, secure boot, and containerized software deployment to limit malicious tampering.

Does Edge AI store personal data?

Not persistently, if it is properly configured. The system temporarily holds raw data in volatile memory (RAM) for the milliseconds required to run inference, and it is engineered to overwrite or discard that data immediately after extracting the necessary anonymous insights. That transient step still counts as processing personal data under GDPR, but because the raw data is never written to long-term storage, there is no archive to breach, subpoena, or misuse later.

Top Use Cases & Solutions for Privacy-First Edge AI in 2026

The shift to the edge is transforming high-risk industries across Europe:

- Smart Cities and Crowd Density Monitoring: Municipalities are using Edge AI solutions to count crowds in train stations, plazas, and festivals. By analyzing “blobs” or human shapes rather than utilizing facial recognition, these systems achieve over 95% accuracy while remaining strictly anonymous and GDPR compliant.

- Healthcare & Remote Patient Monitoring: Wearable medical IoT devices analyze blood oxygen or heart rate anomalies locally. They alert doctors to life-threatening changes without sending raw, continuously identifiable patient histories to third-party servers, ensuring both GDPR and medical device compliance.

- Industrial IoT & Smart Manufacturing: Factories are deploying edge vision AI for defect detection on assembly lines. This keeps proprietary manufacturing processes and employee movements completely isolated from external cloud networks.

The Content Gap: Hardware Alone Doesn’t Guarantee Compliance

A critical warning for business leaders: buying an industrial Edge AI box from a top-tier manufacturer does not automatically make your operations GDPR compliant.

In the language of the EU AI Act, the hardware manufacturer is typically the “Provider” and the business using it is the “Deployer.” Under GDPR, that same business is usually the data controller, so it remains legally responsible for establishing a lawful basis (including consent where applicable), clearly defining the data lifecycle, posting transparent public notices (even for anonymous counting systems), and checking that the AI models loaded onto the hardware have been tested for algorithmic bias.

Frequently Asked Questions (FAQs)

Does using Edge AI mean my business no longer needs to ask for GDPR consent?

Not necessarily. If your Edge AI system strictly processes and immediately deletes data without ever identifying a unique individual (for example, anonymous crowd counting using thermal sensors), consent might not be required, because you may be able to rely on the “legitimate interests” basis in Article 6(1)(f), which you should document with a balancing test. However, GDPR transparency rules still apply: you must display clear physical signage informing the public that anonymized AI processing is taking place on the premises.

Is facial recognition legal in Europe if the AI processing happens entirely on the edge device?

It is still heavily restricted. Even if the biometric data (a facial scan) never leaves the local camera, the act of identifying a specific, unique individual constitutes processing special category data under GDPR Article 9. Regardless of where the processing happens (cloud or edge), you still need explicit, freely given consent or another narrow Article 9 exemption (such as a substantial public interest defined in law) to deploy facial recognition in the EU.

Can an Edge AI device be hacked to steal the raw data?

While edge devices drastically reduce the attack surface by not transmitting raw data over the internet, the physical hardware can still be targeted. This is why GDPR-compliant edge architecture must include hardware-backed key protection, such as Trusted Platform Modules (TPMs), and secure boot processes. If a properly configured privacy-first edge device is breached, the attacker should find only anonymized metadata (like simple records of temperatures or counts), because the raw video or sensor data is overwritten in volatile memory (RAM) right after inference.

Do we still need to conduct a Data Protection Impact Assessment (DPIA) if we switch to Edge AI?

Yes, in most cases. While Edge AI significantly lowers your compliance risk profile, GDPR Article 35 requires a DPIA whenever processing is likely to result in a high risk to individuals, and systems that systematically monitor public spaces or employees, or that process health data at scale, usually meet that threshold. The advantage is that edge computing and immediate data destruction serve as your primary risk mitigation measures within that assessment, making the residual risk much easier to justify.

What is the difference between Data Anonymization and Pseudonymization in edge computing?

This is a critical legal distinction. Anonymization irreversibly removes any way to identify a person (e.g., an edge camera turns a video feed of a shopper into a simple text data point that says “1 adult entered at 9:00 AM”). Pseudonymization masks the identity but keeps additional information, such as a key or lookup table, that allows re-identification later (e.g., replacing a patient’s name with an ID number). Pseudonymized data is still personal data under GDPR. Privacy-first Edge AI aims for true anonymization, because data that can no longer be linked to an individual falls outside GDPR’s scope; the brief on-device processing that produces it still has to comply.
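
The difference is easy to see in code. In this minimal sketch (function names, key, and sample data are all hypothetical), pseudonymization replaces an identifier with a keyed hash that the key holder can reproduce, while anonymization keeps only aggregate counts and drops identifiers altogether.

```python
import hashlib
import hmac
from collections import Counter
from typing import Dict, List, Tuple


def pseudonymize(patient_id: str, secret_key: bytes) -> str:
    """Keyed hash: a stable token that the key holder can re-link to the person."""
    return hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()


def anonymize_entries(entries: List[Tuple[str, int]]) -> Dict[int, int]:
    """Keep only per-hour totals; visitor identifiers are dropped entirely."""
    return dict(Counter(hour for _visitor, hour in entries))


key = b"demo-key-stored-separately"  # hypothetical key, for illustration only
token_1 = pseudonymize("patient-42", key)
token_2 = pseudonymize("patient-42", key)
print(token_1 == token_2)  # same person, same token: records remain linkable

entries = [("visitor-a", 9), ("visitor-b", 9), ("visitor-a", 10)]
hourly_counts = anonymize_entries(entries)
print(hourly_counts)
```

Because the same input always yields the same token, pseudonymized records can still be joined and, with the key, traced back to a person. Aggregates are not automatically anonymous either: very small counts in a small location may still single someone out.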

Conclusion: Your Next Steps to Future-Proof Your AI Infrastructure

The era of sending massive troves of sensitive European data to centralized clouds is coming to an end. Edge AI provides a practical bridge between advanced technological capability and demonstrable legal compliance.

However, reading about privacy-first architecture is only the first step. To genuinely protect your organization from GDPR fines and turn compliance into a competitive advantage, you need to take immediate action. Here is what your IT and legal teams should do this week:

- Audit Your Current IoT Data Flows: Identify exactly what your current sensors and cameras are sending to the cloud. Are you transmitting raw video, or just metadata? If it is raw data, calculate the financial risk of a potential breach versus the cost of upgrading to edge processors.

- Interrogate Your Hardware Vendors: When procuring new smart equipment, do not accept vague “GDPR Compliant” marketing labels. Ask your vendors specific engineering questions: Does this device utilize Federated Learning? How many milliseconds does raw data sit in the RAM before it is overwritten? Does the hardware feature a Trusted Platform Module (TPM)?

- Align IT with Compliance: Do not buy Edge AI hardware in a vacuum. Bring your engineering team and your Data Protection Officer (DPO) into the same room. Ensure your public privacy notices and Data Protection Impact Assessments (DPIAs) reflect the fact that data is now being anonymized and destroyed locally.
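
For the data-flow audit, one simple starting point is to check outgoing messages against an allowlist of metadata fields. The sketch below is an assumption about how such a check might look; the field names and the size limit are hypothetical and should be replaced with your own schema.

```python
from typing import Any, Dict, List

# Hypothetical schema: the only fields a device is expected to transmit.
ALLOWED_FIELDS = {"sensor_id", "timestamp", "people_count", "temperature_c"}
MAX_STRING_BYTES = 256  # hypothetical limit; large strings may hide encoded media


def audit_payload(payload: Dict[str, Any]) -> List[str]:
    """Return a list of findings; an empty list means the payload looks like metadata."""
    findings: List[str] = []
    for key, value in payload.items():
        if key not in ALLOWED_FIELDS:
            findings.append(f"unexpected field: {key}")
        if isinstance(value, (bytes, bytearray)):
            findings.append(f"binary blob in field: {key}")
        elif isinstance(value, str) and len(value.encode("utf-8")) > MAX_STRING_BYTES:
            findings.append(f"oversized string in field: {key}")
    return findings


clean = {"sensor_id": "dock-3", "timestamp": "2026-01-01T09:00:00+00:00", "people_count": 4}
leaky = {"sensor_id": "dock-3", "frame_jpeg": b"\xff\xd8\xff"}

print(audit_payload(clean))
print(audit_payload(leaky))
```

A check like this only flags obvious leaks. It does not replace a review of what each field means, since a seemingly harmless field can still carry identifying detail.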

By taking these steps, you do more than just keep the regulators at bay—you build a resilient, high-performance infrastructure that your B2B clients and consumers can implicitly trust.