In early 2024, a finance worker at a multinational firm in Hong Kong attended a video call with what appeared to be their CFO and several colleagues. They discussed an urgent, secret transaction. The worker felt something was slightly “off,” but the voices and faces were convincing. Following the call, the employee transferred about US$25.6 million to scammers.

What was once a tool for harmless celebrity impressions or funny viral clips has officially become a multi-billion-dollar weapon. As we move through 2026, the line between an AI prank and a high-stakes cybercrime has vanished. This guide explores the technology behind the threat and how the next generation of deepfake audio detection SaaS is neutralizing it.

The Difference Between an AI Voice Prank and Vishing

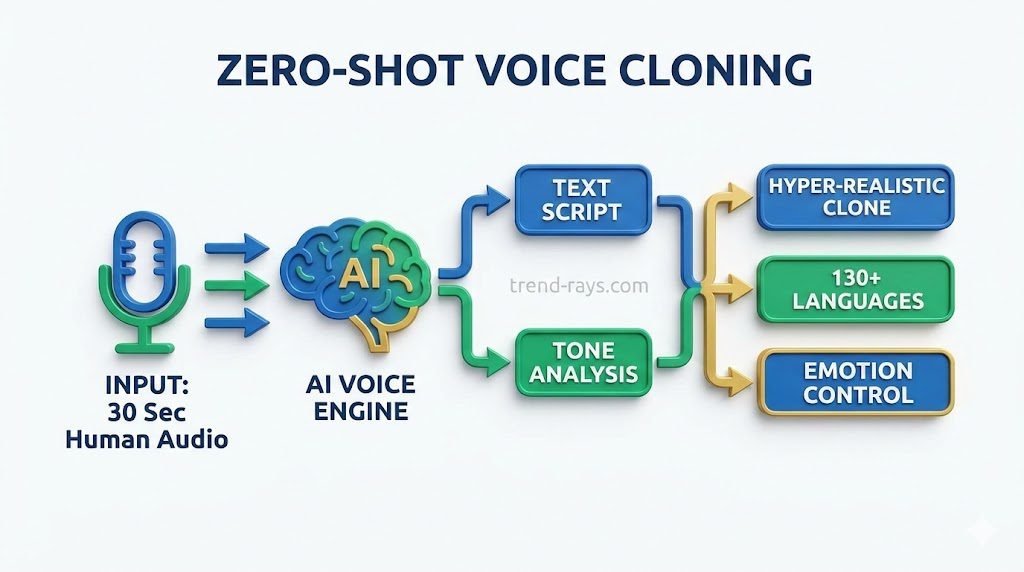

To understand the threat landscape, we must first define how voice cloning shifts from benign to malicious use. Text-to-Speech (TTS) engines and zero-shot voice cloning platforms are now highly accessible, and scammers often use high-pressure tactics similar to the scripts found on various prank calling platforms.

- The AI Prank: A user might clone a famous politician’s voice to read a funny script or create a custom voicemail greeting. The intent is entertainment, and the impact is harmless.

- The Vishing (Voice Phishing) Attack: This is the weaponized version. Attackers use these exact same commercial off-the-shelf (COTS) voice engines to execute targeted social engineering. The voice used isn’t a celebrity, but a trusted boss or family member.

This shift from fun to fraud is powered by the same tools hobbyists use for creative projects, from desktop software such as Clownfish Voice Changer to mobile apps, configured to produce realistic output.

The Psychology of the “Perfect Fool”: Why Humans Fail

Why do intelligent, security-conscious people fall for AI audio scams? It’s not a lack of intelligence; it’s a biological “hack” of the human brain.

- The Familiarity Bypass: When we hear a voice with a familiar accent or cadence, we tend to lower our guard, and trust can override skepticism. We are inclined to believe that “local” equals “safe.”

- Manufactured Urgency: Scammers clone emotion. By using a panicked tone (“I’ve been arrested,” or “The deal will collapse in 10 minutes”), they trigger the victim’s “fight or flight” response. Acute stress of this kind impairs the prefrontal cortex, the part of the brain responsible for logical reasoning.

- The Authority Bias: We are socially conditioned to follow instructions from superiors. When the “CEO” calls, an employee’s first instinct is to be helpful, not suspicious.

The Solution: Meet Your “Digital Bodyguard”

Since human ears can no longer be relied on for detection, we need a digital layer that doesn’t get “stressed.” Modern Deepfake Audio Detection SaaS acts as a background bodyguard for every call.

How the Tech Actually Works:

- Liveness Detection: Real human speech comes from a physical vocal tract. It has micro-vibrations and “breathiness” that current AI struggles to replicate. SaaS tools “poke” the audio to see if it has the resonance of a physical body or the “flatness” of a digital speaker.

- Micro-Frequency Analysis: SaaS platforms like Pindrop or Resemble AI analyze frequency detail that the human ear cannot pick out. They look for “algorithmic stitching”: the tiny digital seams where the AI combined sounds to form words.

- Real-Time Labeling: For a business, the solution is a subtle HUD (Heads-Up Display) on their VoIP phone or Zoom screen that shows a “Trust Score.” If the score drops, a red banner appears: “Caution: Synthetic Audio Detected.”
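The labeling step can be pictured as a small piece of glue code between a detector and the call screen. The sketch below is purely illustrative: the 0 to 1 score range, the 0.5 threshold, the smoothing factor, and every function name are assumptions, not any vendor’s API.

```python
from typing import Iterable, Iterator, List, Optional

WARNING_TEXT = "Caution: Synthetic Audio Detected"


def smooth_scores(scores: Iterable[float], alpha: float = 0.5) -> Iterator[float]:
    """Exponentially smooth per-chunk trust scores so that a single
    noisy chunk does not flip the banner on and off."""
    smoothed: Optional[float] = None
    for score in scores:
        if smoothed is None:
            smoothed = score
        else:
            smoothed = alpha * score + (1 - alpha) * smoothed
        yield smoothed


def banner_for(trust_score: float, threshold: float = 0.5) -> Optional[str]:
    """Return the warning text when the score falls below the threshold
    (assumed value), otherwise None (no banner)."""
    return WARNING_TEXT if trust_score < threshold else None


def label_call(scores: Iterable[float]) -> List[Optional[str]]:
    """Map a stream of raw trust scores to what the HUD would show per chunk."""
    return [banner_for(s) for s in smooth_scores(scores)]
```

With the assumed defaults, a call whose raw scores drop from 0.9 and 0.8 to 0.1 shows no banner for the first two chunks and the warning from the third chunk onward. Smoothing trades a little reaction time for fewer false alarms; a real product would tune both values against measured error rates.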

The Rise of the “Deepfake CEO Scam” & Enterprise Risk

The “CEO Scam” (Business Email/Voice Compromise) is among the most costly forms of cybercrime today. Attackers don’t need to hack a database; they just need to sound exactly like the CFO demanding an immediate vendor payment before the end of the quarter.

Why Traditional Defense Fails

Historically, enterprise security architecture has been built around verifying what you know (passwords) and what you have (tokens). Neither check applies when the attacker never logs in and simply talks to an employee: standard email firewalls and 2FA cannot intercept a live phone call. Furthermore, human ears are notoriously unreliable at detecting high-fidelity synthetic audio in high-stress situations.

The Dual Perspective: Who Benefits?

For modern entrepreneurs, integrating voice security is becoming a mandatory pillar of a robust AI tech stack for small businesses, ensuring that automation doesn’t come at the cost of vulnerability.

The Enterprise (CEO & IT Director’s View)

For a business, this SaaS isn’t just “security”—it’s Operational Integrity.

- The Benefit: It prevents the “Deepfake CEO Scam” from ever reaching the finance team.

- IT Point of View: These tools are frictionless. They integrate via API into existing systems (Teams, Slack, Cisco) and provide “Voice Biometrics” that replace vulnerable security questions (like “What was your first pet?”).

The Individual (The Family Perspective)

For an individual, this is about protecting the most vulnerable people we love.

- The Benefit: It stops the “Grandparent Scam” (cloning a child’s voice to ask for bail money).

- The Individual Angle: Consumer apps now offer “Identity Shielding” that alerts you if a caller’s voice signature doesn’t match the person they claim to be.
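A “voice signature” check of this kind is commonly built by comparing numeric voice embeddings. The sketch below assumes the embeddings already exist (producing them requires a speaker model that is out of scope here); the function names and the 0.8 threshold are hypothetical.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors (1.0 = same direction)."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


def matches_enrolled_voice(
    enrolled: Sequence[float],
    caller: Sequence[float],
    min_similarity: float = 0.8,  # assumed threshold, not a vendor default
) -> bool:
    """True when the caller's embedding is close enough to the enrolled one."""
    return cosine_similarity(enrolled, caller) >= min_similarity
```

A similarity check alone only says the voice sounds like the enrolled person. A good clone may pass it, which is why it is usually paired with liveness detection.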

The Economics: What Does Peace of Mind Cost?

The pricing for this SaaS is finally becoming scalable. It’s a “pennies to protect thousands” equation.

| Plan Type | Who It’s For | Estimated Pricing (2026) |
| --- | --- | --- |
| Consumer/Family | Individuals & Small Families | $7 – $12 / month |
| Business SaaS | SMEs & Corporate Offices | $25 – $60 / user / month |
| Enterprise API | Banks & Global Call Centers | $0.10 – $0.40 per minute analyzed |

The Bottom Line: Paying $50 per user per month to protect an IT team is far cheaper than losing a $250,000 wire transfer.
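To make that comparison concrete, here is a small worked calculation. It assumes a per-user price of $50 a month (inside the Business SaaS range above) and a hypothetical team of 10 users; the $250,000 loss is the figure quoted in the text.

```python
def annual_cost(price_per_user_month: float, users: int) -> float:
    """Yearly subscription cost for a team on a per-user monthly price."""
    return price_per_user_month * users * 12


def years_covered_by_loss(loss: float, price_per_user_month: float, users: int) -> float:
    """How many years of subscription one avoided loss would pay for."""
    return loss / annual_cost(price_per_user_month, users)


# Assumed inputs: $50 per user per month, 10 users, one $250,000 fraud.
yearly = annual_cost(50, 10)                     # 6000
years = years_covered_by_loss(250_000, 50, 10)   # about 41.7
```

Under these assumptions the team pays $6,000 a year, so a single prevented $250,000 transfer covers roughly 41 years of the subscription. The ratio shrinks as headcount grows, so larger organizations should run the numbers with their own seat count.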

How Cybersecurity SaaS is Neutralizing the Threat

The solution lies in dedicated AI voice scam protection that removes the burden of detection from human employees.

- Liveness Detection for Audio: Modern SaaS platforms analyze the incoming stream to confirm it originates from a physical human vocal tract. It flags the “flat” resonance of a digital speaker.

- Micro-frequency & Phonetic Analysis: Detection software scans for acoustic artifacts, unnatural breathing patterns, and digital “stitching” that are imperceptible to the human ear.

- Cryptographic Watermarking: Leading AI voice vendors are now embedding digital signatures into their generated audio, allowing SaaS tools to instantly recognize synthetic origins.
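Commercial detectors do not publish their feature sets, so the following is only a generic illustration of what frequency-domain analysis means in practice. It computes spectral flatness, a classic signal-processing measure (geometric mean of the power spectrum divided by its arithmetic mean). It is not a deepfake detector on its own; real systems are typically learned models that combine many such features.

```python
import cmath
import math
from typing import List, Sequence


def power_spectrum(frame: Sequence[float]) -> List[float]:
    """Power of DFT bins 1..N/2-1 (DC bin skipped). Uses a naive O(N^2)
    DFT, which is fine for short illustrative frames."""
    n = len(frame)
    if n < 4:
        raise ValueError("frame must contain at least 4 samples")
    spectrum = []
    for k in range(1, n // 2):
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(frame)
        )
        spectrum.append(abs(coeff) ** 2)
    return spectrum


def spectral_flatness(frame: Sequence[float], eps: float = 1e-12) -> float:
    """Near 0 for tonal (peaky) spectra, near 1 for flat, noise-like spectra."""
    power = [p + eps for p in power_spectrum(frame)]  # eps avoids log(0)
    log_mean = sum(math.log(p) for p in power) / len(power)
    arithmetic_mean = sum(power) / len(power)
    return math.exp(log_mean) / arithmetic_mean


# A pure tone has one dominant bin; a single impulse has a flat spectrum.
tone = [math.sin(2 * math.pi * 4 * t / 64) for t in range(64)]
impulse = [1.0] + [0.0] * 63
```

Here `spectral_flatness(tone)` comes out close to 0 and `spectral_flatness(impulse)` close to 1. A detector would track how features like this evolve over a call and feed them to a classifier rather than threshold any single value.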

The Business vs. Individual Perspective

For the IT Director & CEO (The Business View)

- Risk Mitigation: Preventing a single $200k fraud can pay for the software for a decade. At $50 per user per month, that sum covers ten years of licensing for a team of about 33 users.

- Frictionless Security: Real-time voice authentication reduces “Average Handle Time” in call centers because the voice is the password.

- Operational Integrity: It ensures that sensitive data stays within authenticated circles.

For the Individual (The Personal View)

- The “Grandparent Scam” Defense: Individuals can now use consumer-grade apps that flag “Likely AI” on incoming calls.

- Emotional Security: Knowing that a frantic call from a “family member” can be verified prevents heart-wrenching emotional and financial trauma.

The Economics of Defense: Pricing Models

| Model | Target Audience | Estimated Cost (2026) |
| --- | --- | --- |
| Pay-Per-Minute | Startups / Pilots | $0.05 – $0.50 / minute |
| Per-Seat (Subscription) | Corporate Offices | $15 – $50 / user / month |
| Enterprise API | Banks / Call Centers | Custom (High Volume) |
| Consumer Apps | Individuals | Free (Basic) or $4.99/mo |
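Choosing between pay-per-minute and per-seat pricing comes down to call volume. The sketch below works out the break-even point using the estimated ranges from the table; the function names are illustrative, and it assumes every analyzed minute is billed at a flat rate.

```python
def breakeven_minutes(seat_price_per_month: float, price_per_minute: float) -> float:
    """Minutes per user per month at which both pricing models cost the same."""
    if price_per_minute <= 0:
        raise ValueError("price_per_minute must be positive")
    return seat_price_per_month / price_per_minute


def cheaper_model(minutes_per_month: float, seat_price_per_month: float,
                  price_per_minute: float) -> str:
    """Name the cheaper model for one user at a given monthly call volume."""
    metered_cost = minutes_per_month * price_per_minute
    return "per-minute" if metered_cost < seat_price_per_month else "per-seat"
```

At the low end of both ranges ($15 per seat, $0.05 per minute) the break-even is 300 analyzed minutes per user per month. At the high end ($50 per seat, $0.50 per minute) it is 100 minutes. Below the break-even, metered pricing is cheaper, which is why it suits pilots and low-volume teams.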

Best Practices & Real-World Examples

Beyond software, human protocols are the ultimate firewall.

- Establish a “Safe Word”: Companies and families should have a non-guessable word (e.g., “Neon-Pineapple”). If a caller asks for money or secrets, they must provide the word.

- The “Call Back” Rule: If you get an urgent request, hang up and call the person back on their saved direct line. It is far harder for a scammer to intercept an outbound call to a known number than to fake an incoming one.

- Watch for “Perfect” Speech: Real people say “um” and “uh” naturally. If the voice is too perfectly aligned or lacks natural background noise, be suspicious.

The Survival Kit: 3 Hacks No AI Can Beat

Even with the best SaaS, human common sense is your last line of defense.

- The “Safe Word”: Establish a family or company safe word (e.g., “Neon-Pineapple”). If a “CEO” or a “son” calls with an emergency, they must provide the word. If they can’t, hang up.

- The “Call-Back” Protocol: If you get an urgent, high-pressure request, say: “I’ll call you back in 60 seconds.” Then, call them back on their saved, verified number. Scammers can easily spoof an incoming call, but hijacking your outbound call to a known number is far harder.

- The “Personal Question”: Don’t ask something Google knows. Ask something only you know. “What was the name of that terrible waiter we had in Goa last year?”
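Teams that want to write these rules into a checklist or an approval tool can express them as a simple policy. The sketch below is one possible encoding, not a standard: the `Request` fields are hypothetical, and requiring both the safe word and a completed call-back for sensitive requests is an assumption that goes slightly beyond the list above.

```python
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    involves_money_or_secrets: bool  # is this a sensitive request?
    safe_word_given: str             # what the caller said when asked
    verified_by_callback: bool       # did we call back on a saved number?


def _normalize(word: str) -> bytes:
    """Ignore case and surrounding whitespace when comparing safe words."""
    return word.strip().lower().encode("utf-8")


def should_proceed(request: Request, expected_safe_word: str) -> bool:
    """Allow sensitive requests only if the safe word matches AND the
    call-back succeeded (assumed policy)."""
    if not request.involves_money_or_secrets:
        return True
    safe_word_ok = hmac.compare_digest(
        _normalize(request.safe_word_given), _normalize(expected_safe_word)
    )
    return safe_word_ok and request.verified_by_callback
```

For example, `should_proceed(Request(True, "neon-pineapple", False), "Neon-Pineapple")` returns `False` because the call-back never happened, even though the word was right. The point of writing it down is that the rule no longer depends on how convincing the caller sounds.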

While detection software is the frontline defense, true digital sovereignty requires a combination of smart protocols and the use of secure anonymous chat apps to keep your metadata and identity out of the hands of bad actors.

Frequently Asked Questions (FAQs)

Q: Can AI voice cloning bypass my bank’s voice recognition security?

A: Unfortunately, yes. Standard voice biometrics used by many financial institutions often look for “voice prints” that high-fidelity AI clones can now replicate with over 95% accuracy. This is why many banks are now upgrading to enterprise voice cloning security that includes “liveness detection” to distinguish between a digital broadcast and a live human speaker.

Q: How many seconds of audio does a scammer need to clone my voice?

A: In 2026, technology has reached the “indistinguishable threshold.” Modern zero-shot cloning tools only need 3 to 5 seconds of clear audio—often scraped from a LinkedIn video or an Instagram Reel—to create a convincing synthetic replica.

Q: What are the “digital artifacts” I should listen for in a suspected deepfake call?

A: While AI is getting better, listen for synthetic audio artifacts such as:

- Unnatural Breathing: AI often forgets to “breathe” or places breaths in the middle of words.

- Metallic Tones: A subtle “robotic” or electronic hum during complex vowel sounds.

- Lack of Background Consistency: If the caller claims to be at an airport but the background is perfectly silent or has a looping “office” sound, it’s a major red flag.

Q: Is there a free app to detect if a call is an AI scam?

A: While professional deepfake audio detection SaaS is usually subscription-based for businesses, individual users can use apps that offer “AI Call Shielding.” These tools analyze incoming audio frequencies in real time and provide a “Risk Score” on your caller ID.

Q: What is the “Safe Word” protocol, and does it actually work?

A: It is one of the few defenses that does not depend on how good the AI is. By establishing a non-guessable family or company safe word, you create a manual verification layer that the AI cannot scrape from the internet. If a caller cannot provide the word during an “emergency,” treat the call as fraud.

Conclusion

The democratization of AI means anyone with 3 seconds of your audio can impersonate you. While the “Audio Prank” era was amusing, the “Audio Scam” era requires a new level of vigilance. By combining real-time voice authentication software with updated human protocols, we can reclaim the security of our most basic form of communication: our voice.

We are in a race between Scams and SaaS. The scammers have the head start, but the technology to fight back is finally here. By combining Real-Time Voice Authentication with simple human protocols, we can keep the “pranks” funny and the “scams” at the door.