If you live in Australia, you likely remember the notorious “Hi Mum” WhatsApp text scams that swept the country in 2022. Fast forward to 2026, and the cybercriminal playbook has received a terrifying, high-tech upgrade.

Instead of a generic text message from an unknown number, Australians are now receiving frantic phone calls that sound exactly like their children, their boss, or even the Premier of their state. By weaponizing artificial intelligence, scammers are bypassing traditional skepticism and directly targeting Australian bank accounts and crypto wallets.

Here is exactly how AI voice cloning is infiltrating Australia, why traditional defences are failing, and the new cybersecurity technology fighting back to protect your digital identity.

These tools can be weaponized for fraud, but the underlying technology was built primarily for creative efficiency, and it is driving a massive AI voiceover disruption in film, gaming, and global localization.

What is an AI Voice Scam (Vishing)?

An AI voice scam, or “vishing” (voice phishing), is a cyber-attack in which criminals use artificial intelligence software to clone a real person’s voice. By feeding as little as 3 to 5 seconds of audio scraped from social media into a voice-cloning text-to-speech engine, scammers can generate hyper-realistic speech to impersonate loved ones, bank officials, or executives over the phone.

What began as a harmless joke on basic free prank call websites has rapidly evolved into a sophisticated, AI-driven cyber-attack capable of draining bank accounts.

How Scammers are Targeting Australians

The technology is borderless, but the tactics used against Australians are highly localized. According to recent data from Swinburne University of Technology, AI voice cloning scams cost Australians $25.8 million in the first half of 2025 alone. Scammers are exploiting our trust in specific national institutions, family bonds, and local leaders.

Case Study: The Fake Premier Investment Scam

To understand how convincing this technology is, look at the recent case involving Sydney-based advertising executive Dee Madigan.

Madigan received a message from what appeared to be the social media account of then-Queensland Premier Steven Miles, pitching a “fantastic investment idea.” Because she knew the Premier personally, she immediately suspected a text-based scam. To test the scammer and shut the interaction down, she asked them to call her.

Instead of backing down, the scammer called. “All of a sudden, my phone rang and on the other end was his voice,” Madigan reported. The scammers had used publicly available audio of the Premier to clone his voice in real time. Madigan noted it was “surprisingly good” and “definitely sounded like Steven.”

If a highly aware advertising executive can be momentarily stunned by an AI clone of a state premier, imagine how effective this tactic is against an unsuspecting retiree.

Other Common Australian Attack Vectors

The ATO & Bank Impersonation: You receive a call from what sounds exactly like a local CommBank representative or an Australian Taxation Office (ATO) official. The caller ID is spoofed to look legitimate, and the voice uses a perfect Australian accent to warn you of a “compromised account” or an “unpaid tax warrant.”

“Hi Mum” 2.0 (The Family Emergency): Criminals scrape a few seconds of audio from a teenager’s public TikTok or Instagram reel. They then call the parents, using the cloned voice to claim they’ve been in a car accident or lost their phone and urgently need funds transferred via PayID.

The Fake Investment Scheme: High-profile Australians are actively being cloned. Recently, the voices of state premiers and local celebrities have been deepfaked to endorse fraudulent cryptocurrency trading platforms, lending a false sense of local authority to global scams.

The Final Target: Why Scammers Want Your Voice

While the cloned voice is the weapon, the ultimate goal isn’t just to trick you out of a quick bank transfer. Scammers use the cloned voice to induce a state of absolute panic, pushing you to hand over the keys to your entire financial life.

Their primary targets over the phone are one-time passwords (OTPs), PayID details, and cryptocurrency seed phrases. Once a scammer uses a familiar voice to convince you to read an OTP out loud, they can bypass your two-factor authentication (2FA) and drain your accounts in seconds.
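Why is an OTP read aloud so dangerous? Many authenticator apps generate codes with the time-based one-time password (TOTP) algorithm from RFC 6238. The minimal Python sketch below assumes the common defaults of HMAC-SHA1, a 30-second window, and six digits. SMS codes from a bank may be generated differently on its own servers. The sketch shows that the server only checks the digits, never who supplied them.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    msg = struct.pack(">Q", counter)  # counter as an 8-byte big-endian integer
    digest = hmac.new(secret, msg, hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # "dynamic truncation" offset from the last nibble
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Time-based OTP (RFC 6238): HOTP where the counter is the current time window."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)


def verify(secret: bytes, submitted: str, at: Optional[float] = None) -> bool:
    """Server-side check. It only compares digits; it cannot tell WHO typed them."""
    return hmac.compare_digest(totp(secret, at), submitted)


if __name__ == "__main__":
    secret = b"12345678901234567890"  # RFC 6238 test secret, not a real account
    now = time.time()
    code = totp(secret, at=now)
    # A code you read aloud to a fake "bank officer" passes exactly as if you typed it.
    print(verify(secret, code, at=now))  # True
```

Nothing in `verify` distinguishes your phone from a scammer who heard you read the code. That is why legitimate institutions tell you never to share these codes with anyone who calls you.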

How Scammers Use Your Voice: The “Voiceprint” Bypass

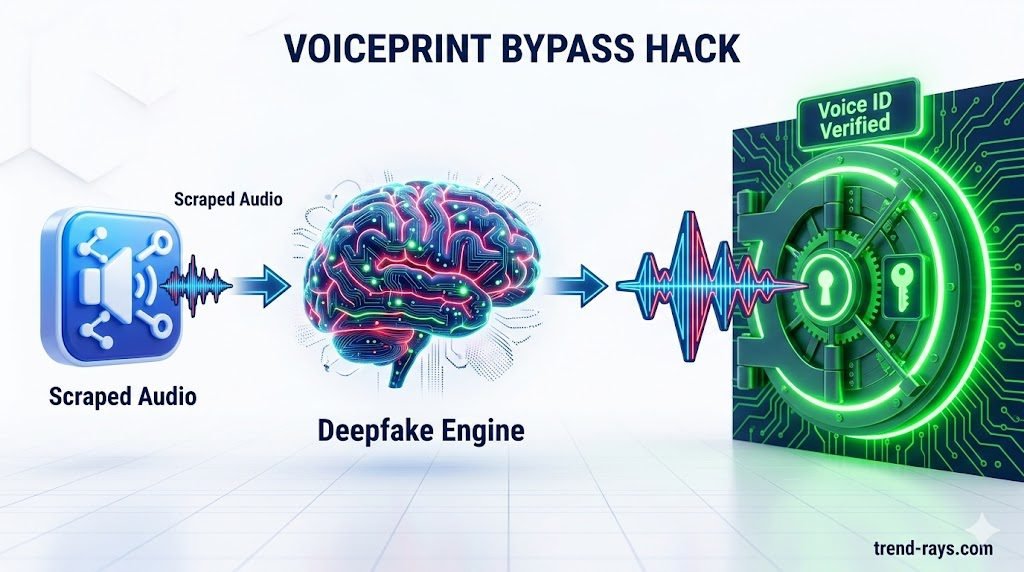

Creating panic to steal an OTP is the most common tactic, but sophisticated scammers have a second, far more technical use for your cloned voice: bypassing government and bank biometrics. Hobbyists might use simple mobile apps or tweak their Clownfish voice changer settings for gaming and content creation. Scammers, by contrast, use advanced zero-shot cloning engines to replicate your vocal biometric closely enough to fool automated checks.

Millions of Australians use a “Voiceprint” to verify their identity over the phone with Centrelink, the ATO, and banks (such as Bank Australia). These institutions claim that your voice is as unique as a fingerprint. However, in 2026, AI has broken this security model.

Here is how the scam typically works:

- The Scraping: The scammer pulls 30 seconds of your voice from a public podcast, a corporate video, or your social media.

- The Data Breach: The scammer acquires your Customer Reference Number (CRN) or account number from a previous data leak (such as the Optus or Medibank breaches).

- The Automated Hack: The scammer dials the ATO or bank automated self-service line. When the automated prompt asks, “Please say your voicephrase to verify your identity,” the scammer simply plays your cloned voice saying the required phrase (e.g., “In Australia, my voice identifies me”).

- The Takeover: The legacy voice recognition software fails to detect the AI. It unlocks the account, allowing the scammer to change linked bank account details (BSB and account number), check balances, or initiate transfers without ever speaking to a human.
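Why does a good clone slip past? A common design for voice biometrics turns each recording into a list of numbers (an "embedding") and accepts the caller if that list is close enough to the enrolled voiceprint. The toy Python model below uses made-up three-number embeddings, cosine similarity, and an assumed 0.85 threshold. Real vendor systems are far more complex, but the weakness is the same: the check measures resemblance, not whether a live person is speaking.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors (1.0 = identical direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def voiceprint_matches(enrolled: Sequence[float], sample: Sequence[float],
                       threshold: float = 0.85) -> bool:
    """Toy legacy check: similarity only. Nothing here asks whether a live human spoke."""
    return cosine_similarity(enrolled, sample) >= threshold


# Hypothetical 3-number embeddings; real systems use hundreds of dimensions.
enrolled = [0.62, 0.35, 0.70]      # voiceprint captured at enrolment
live_caller = [0.60, 0.38, 0.69]   # the genuine customer on a later call
cloned_audio = [0.58, 0.33, 0.74]  # a good AI clone lands in the same region
stranger = [0.10, 0.95, 0.20]      # a different person's voice

for name, sample in [("live", live_caller), ("clone", cloned_audio), ("stranger", stranger)]:
    print(name, round(cosine_similarity(enrolled, sample), 3),
          voiceprint_matches(enrolled, sample))
```

With these illustrative numbers, both the genuine caller and the clone are accepted and only the stranger is rejected. This is why liveness detection, rather than a tighter similarity threshold alone, is the usual countermeasure.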

Why Traditional Defenses Fail (And Why We Fall For It)

Australian studies show that even highly tech-savvy people have only a coin-flip chance of detecting a high-fidelity AI voice. Why? Because the scam relies on a psychological exploit often called the “amygdala hijack.”

Scammers don’t just clone the sound of a voice; they clone stress and urgency. When you hear a loved one crying or an “ATO official” threatening arrest, your brain’s “fight or flight” response is triggered. That stress response suppresses the prefrontal cortex, the logical part of your brain that would normally notice digital glitches or ask critical questions. You stop analyzing and start complying.

The Solution: How Businesses Are Actually Fighting Back

Telling Australians to simply “listen closely” is terrible advice. The defense has to shift from human ears to sophisticated software. Across Australia, enterprise call centers are quietly deploying deepfake audio detection SaaS. Here is how the solution works in practice:

1. API Integration in Call Centers: Businesses don’t ask their staff to run software manually. Instead, they integrate SaaS tools (like Pindrop or Nuance’s latest anti-spoofing updates) directly into their VoIP phone systems via API. When a customer calls a bank, the software analyzes the audio stream in the background before the human agent even picks up.

For Australian SMEs and local contractors, integrating these protective SaaS tools is quickly becoming a non-negotiable part of building the ultimate AI tech stack for small business, ensuring that customer automation doesn’t become a security liability.

2. Real-Time Risk Scoring (Liveness Detection): As the caller speaks, the SaaS tool looks for acoustic cues of a live human speaker, such as the natural “acoustic resonance” of a physical chest and vocal cords, which synthetic voices tend to reproduce imperfectly. The software displays a “Trust Score” on the call center agent’s screen. If the score drops below a set threshold (for example, 90%), the agent’s screen flashes red, instructing them to trigger a manual security protocol (like sending an SMS code to the registered device).

3. Moving to “Zero-Trust” Authentication: The biggest shift businesses are making is accepting that voice is no longer a password. Forward-thinking Australian banks are actively deprecating “Voice ID” and moving back to strict Multi-Factor Authentication (MFA) via authenticator apps or secure hardware tokens.

4. Micro-Frequency Analysis: Deepfake engines can leave behind digital artifacts: tiny algorithmic “stitches” and unnatural breathing patterns that human ears usually miss. Detection software scans for these anomalies in milliseconds, then blocks the call or alerts the human operator that the voice may be spoofed.
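Steps 1 and 2 describe a screen-then-route flow. Below is a minimal Python sketch of that routing logic under stated assumptions. `score_chunk` is a hypothetical stand-in for a vendor detector; products such as Pindrop expose their own, different APIs, which are not reproduced here. Each chunk score is assumed to be a 0 to 1 liveness probability, and the 0.90 threshold mirrors the example above rather than any vendor default.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

TRUST_THRESHOLD = 0.90  # the article's example cut-off, not a vendor default
STEP_UP = "step-up: send SMS code to registered device"


@dataclass
class CallDecision:
    trust_score: float  # lowest liveness score seen (0.0 to 1.0)
    flag_red: bool      # True -> agent's screen flashes red
    action: str


def screen_call(chunks: Iterable[bytes],
                score_chunk: Callable[[bytes], float],
                threshold: float = TRUST_THRESHOLD) -> CallDecision:
    """Score audio chunks before the agent answers, then pick a routing action.

    score_chunk is a hypothetical detector returning the probability that a
    chunk came from a live human. Taking the minimum (not the average) means
    one strongly synthetic-sounding segment is enough to flag the call.
    """
    scores = [score_chunk(chunk) for chunk in chunks]
    if not scores:
        # No audio to analyse: fail closed rather than waving the call through.
        return CallDecision(0.0, True, STEP_UP)
    trust = min(scores)
    if trust < threshold:
        return CallDecision(trust, True, STEP_UP)
    return CallDecision(trust, False, "proceed with normal verification")


if __name__ == "__main__":
    # Fake scores keyed by chunk; a real detector would analyse the audio itself.
    fake_scores = {b"hello": 0.97, b"urgent": 0.95, b"transfer": 0.62}
    decision = screen_call([b"hello", b"urgent", b"transfer"], fake_scores.__getitem__)
    print(decision.flag_red, decision.trust_score)  # True 0.62
```

Using the minimum score rather than an average is a deliberate, conservative choice. A cloned voice that sounds convincing for most of the call but slips on one phrase still triggers the manual check.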

The Australian Survival Kit: Detailed Steps to Protect Yourself

While enterprise SaaS protects the corporate networks, you must harden your personal protocols. Here is your actionable, step-by-step survival kit:

1. Opt Out of Voice Biometrics Today: Call your bank, the ATO, and Centrelink immediately. Request that they remove your Voiceprint from their database and disable voice authentication for your accounts. Ask them to require PINs, passwords, or SMS verification for all future phone interactions.

2. Establish a Family “Safe Word”: This is the ultimate low-tech defense. Sit down with your parents and children and agree on a random, non-guessable word (e.g., “Neon-Kangaroo”).

- The Rule: If anyone calls claiming to be in an emergency, needing bail money, or asking for a PayID transfer, the listener must ask: “What is the safe word?” If the caller hesitates or fails, hang up.

3. Master the “Call-Back” Protocol: Scammers use software to “spoof” caller ID, making their call look like it is coming from the official CommBank or ATO number.

- The Rule: If you receive an urgent call from an authority figure demanding money or passwords, say: “I understand this is urgent, but for my security, I am hanging up and calling you right back on the official number.” Then dial the number from the institution’s official website or the back of your card, never a number the caller gives you. Scammers cannot easily intercept an outbound call you initiate. If it was a scam, the real bank representative you call back will have no record of the emergency.

4. Report to Scamwatch: If you encounter an AI voice clone, report it immediately to the National Anti-Scam Centre via Scamwatch.gov.au. Reporting helps authorities track the software being used and allows banks to issue targeted warnings to the public.
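For the family safe word in step 2, a random generator beats anything a scammer might guess from your social media. The minimal Python sketch below uses the standard library's `secrets` module. The short `WORDS` list and the `make_safe_word` and `combinations` helpers are illustrative assumptions, not part of any official guidance.

```python
import secrets
from typing import Sequence

# Placeholder list for illustration only. A real list should hold thousands of
# words (for example, a published diceware list) so the result is hard to guess.
WORDS = [
    "neon", "kangaroo", "wombat", "harbour", "lamington", "jacaranda",
    "quokka", "billabong", "pavlova", "magpie", "outback", "cockatoo",
    "eucalypt", "boomerang", "platypus", "galah",
]


def make_safe_word(n_words: int = 3, words: Sequence[str] = WORDS) -> str:
    """Join n words picked with a cryptographically secure RNG, e.g. 'Neon-Kangaroo-Quokka'."""
    if n_words < 1:
        raise ValueError("n_words must be at least 1")
    return "-".join(secrets.choice(words).capitalize() for _ in range(n_words))


def combinations(n_words: int, words: Sequence[str] = WORDS) -> int:
    """How many different safe words the generator could produce."""
    return len(words) ** n_words


if __name__ == "__main__":
    print(make_safe_word())
    print(combinations(2), combinations(3))  # 256 4096
```

With only 16 words, a three-word phrase has just 4,096 possibilities, which is why the size of the word list matters more than the cleverness of any single word.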

People Also Ask (FAQs)

What is the difference between smishing and vishing?

Smishing (SMS phishing) uses deceptive text messages with malicious links to steal information. Vishing (voice phishing) uses phone calls, increasingly powered by AI voice cloning, to socially engineer victims into handing over passwords or money.

Can AI voice scams bypass bank security?

Yes. If an Australian bank relies solely on traditional voice recognition biometrics, a high-quality AI clone can often bypass it. This is why leading financial institutions are adding detection software that analyzes liveness and acoustic resonance rather than relying on a voiceprint alone, or dropping voice ID in favour of stronger multi-factor authentication.

How do I report an AI scam in Australia?

You should immediately report AI voice scams to the National Anti-Scam Centre through the Scamwatch website. If you have provided banking details or an OTP, contact your bank’s fraud department immediately using the phone number on the back of your card.

Despite the risks of deepfakes, speech recognition technology remains incredibly powerful when properly secured, particularly in healthcare, where enterprise-grade AI dictation services are safely reducing administrative burnout.