The AI landscape is moving at lightning speed, but as mainstream tools like ChatGPT, Claude, and Gemini grow more powerful, they also grow more restrictive. Between heavy corporate alignment, strict refusal filters, and constant data harvesting, many users are looking for an alternative.

If you are a creative writer, a cybersecurity researcher, or simply someone who values 100% digital privacy, you need an uncensored AI.

Running an open-source Large Language Model (LLM) locally on your own PC is the ultimate solution. It requires no subscription, works completely offline, and, with an uncensored model, will not refuse a prompt based on corporate safety guidelines.

Whether you are looking to bypass ChatGPT filters or just want a private offline AI chatbot, here is the ultimate, beginner-friendly guide to running uncensored AI locally on your PC.

Privacy vs. Safety: Why Run an Uncensored AI?

Before diving into the setup, it is important to clarify what “uncensored” actually means in the context of open-source AI. Mainstream models are heavily “aligned.” If you ask a corporate AI to write a gritty, violent scene for a sci-fi novel, or ask it to analyze a piece of malware for a cybersecurity test, it will likely refuse, citing safety guidelines.

Running a local, uncensored model solves these frustrations. But beyond just bypassing filters, here are the main advantages:

- Absolute Privacy & Data Security: When you use a cloud AI, your prompts are typically logged and, depending on the provider’s policy, may be reviewed by human raters or used to train future models. A local LLM runs 100% offline. Your data, sensitive documents, and personal chats never leave your hard drive.

- True Intellectual Property (IP) Ownership: Some corporate AI terms of service claim rights over what you generate or allow your inputs to be used to train commercial products. With local, open-source AI, you control your workflow completely.

- Bypassing the “Jailbreak” Game: On Reddit, you will constantly see users trying to “jailbreak” ChatGPT with complex prompts to bypass its filters. This violates terms of service and usually gets patched within days. Uncensored models don’t need to be hacked; their refusal behavior has been removed from the model itself, either by fine-tuning or by editing the weights. They are raw and direct, and they stay that way.

- No Monthly Fees: Once you download the software and a model, they are yours to keep.

- Zero Refusals: Uncensored models do not have built-in moral panics; they simply generate the text you ask for.

Understanding Unrestricted AI: Uncensored vs. Abliterated

When searching for raw, unrestricted local AI, you will encounter a few different terms. Understanding them helps you pick the right model for your local PC:

- Uncensored Models: These are typically fine-tuned on datasets from which refusals and alignment examples have been intentionally filtered out. Popular examples include the Dolphin series (like Dolphin-Mistral), which excels at coding without refusal.

- Abliterated Models: A newer technique, “abliteration” refers to models that have had their refusal mechanisms removed via “digital lobotomy” (modifying the model’s weights directly) rather than through retraining. These models behave almost identically to their base versions but will not refuse complex or unconventional prompts. Look for tags like abliterated or heretic on Hugging Face.

- Base Models: These are raw, foundational models that haven’t been fine-tuned for instruction following or safety. While they are technically uncensored, they are harder to chat with and require precise prompting.

Hardware Reality Check: RAM vs. VRAM Explained

The biggest mistake beginners make is downloading a massive AI model and immediately crashing their computer. Running AI locally does not require a supercomputer, but it does rely heavily on your graphics card (GPU).

Specifically, local AI is hungry for VRAM (Video RAM), not just your standard system RAM.

Here is a quick cheat sheet for what models your PC can handle:

- 4GB to 6GB VRAM (Budget GPUs): You can comfortably run smaller 3B to 7B parameter models. These are fast and great for basic coding or simple chatting.

- 8GB to 12GB VRAM (Mid-Tier GPUs like RTX 3060/4060): The sweet spot. You can run highly intelligent 8B to 14B parameter models with excellent reasoning and creative writing skills.

- 16GB to 24GB VRAM (High-End GPUs like RTX 4090): You can run massive 30B+ parameter models (in 4-bit quantized form) that can approach the quality of paid, cloud-based AIs.

Tip: If you do not have a dedicated GPU, you can still run AI using your system’s standard CPU and RAM, but it will generate text significantly slower.
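The cheat sheet above can be written as a small lookup. This is only a sketch: the tier boundaries are copied from the list, and treating the gaps between tiers (for example 7GB) as belonging to the lower tier is an assumption, as is the function name.

```python
# Tiers copied from the cheat sheet: (minimum VRAM in GB, suggested model sizes).
# Values that fall between tiers are assigned to the lower tier (an assumption).
VRAM_TIERS = [
    (16.0, "30B+ parameter models"),
    (8.0, "8B to 14B parameter models"),
    (4.0, "3B to 7B parameter models"),
]


def suggest_model_size(vram_gb: float) -> str:
    """Return the cheat-sheet recommendation for a given amount of VRAM."""
    for min_vram, suggestion in VRAM_TIERS:
        if vram_gb >= min_vram:
            return suggestion
    # Below the smallest tier, fall back to the CPU tip from the article.
    return "CPU and system RAM (expect slower generation)"


for vram in (2, 6, 8, 12, 24):
    print(f"{vram:>2} GB VRAM -> {suggest_model_size(vram)}")
```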

The 8GB VRAM Reality Check (RTX 4060 / 3060 Ti)

An 8GB GPU like the RTX 4060 is a fantastic entry point for local AI, but you cannot run massive 70B parameter models natively. To prevent Out-of-Memory (OOM) errors and ensure fast token generation, you must rely on Quantization.

Quantization compresses the neural network’s weights. For an 8GB card, your sweet spot is running 7B to 9B parameter models using a Q4_K_M (4-bit) quantization format. This shrinks a standard 8B model down to roughly 4.5GB to 5GB of VRAM, leaving you plenty of headroom for your operating system and your “Context Window” (the memory required to remember your ongoing conversation).
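To see where the 4.5GB to 5GB figure comes from, here is a rough back-of-the-envelope estimate. It assumes Q4_K_M averages about 4.85 bits per weight (real files vary slightly by model) and counts only the weights, not the context window.

```python
def estimate_weights_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    total_bits = params_billions * 1e9 * bits_per_weight
    return total_bits / 8 / 1e9


# Assumption: Q4_K_M averages roughly 4.85 bits per weight; FP16 uses 16.
Q4_K_M_BITS = 4.85
FP16_BITS = 16.0

for size_b in (7, 8, 9):
    q4 = estimate_weights_gb(size_b, Q4_K_M_BITS)
    fp16 = estimate_weights_gb(size_b, FP16_BITS)
    print(f"{size_b}B model: ~{q4:.1f} GB at Q4_K_M vs ~{fp16:.1f} GB at FP16")
```

Under these assumptions an 8B model drops from about 16 GB at full 16-bit precision to just under 5 GB, which is why it fits on an 8GB card.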

Performance Benchmarks for 8GB GPUs

If you are using tools like Ollama or LM Studio with an RTX 4060, here is the performance you can expect when running 4-bit quantized (Q4_K_M) models:

| Model & Parameters | File Size (Q4) | Expected VRAM Usage | Speed (Tokens/Second) | Best Use Case |
| --- | --- | --- | --- | --- |
| Qwen3.5 (9B) | ~5.5 GB | ~6.5 GB | 54 – 58 t/s | Fast decoding, excellent unrestricted coding |
| Llama 3.1 (8B) | ~4.5 GB | ~5.0 GB | 20 – 35 t/s | Versatile conversational AI and text generation |
| Mistral (7B) | ~4.3 GB | ~4.9 GB | 25 – 40 t/s | Snappy, responsive general knowledge |
| Phi-4 (14B) | ~8.0 GB | ~8.5+ GB (Partial CPU offload) | < 15 t/s | Complex reasoning (Expect slower generation) |

(Note: When setting up your local runner, ensure you enable CUDA GPU acceleration to achieve these tokens-per-second speeds.)
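Tokens per second is easier to judge as waiting time. The sketch below converts a few of the speeds quoted above into seconds per reply; the 400-token reply length is a hypothetical figure, and prompt processing time is ignored.

```python
def reply_seconds(reply_tokens: int, tokens_per_second: float) -> float:
    """Time to generate a reply of the given length, ignoring prompt processing."""
    return reply_tokens / tokens_per_second


REPLY_TOKENS = 400  # hypothetical reply length, roughly a few paragraphs

# Speeds taken from the low end of the ranges quoted in the benchmarks above.
for label, tps in (
    ("Phi-4 14B (partial CPU offload)", 15),
    ("Llama 3.1 8B", 20),
    ("Qwen3.5 9B", 54),
):
    print(f"{label}: ~{reply_seconds(REPLY_TOKENS, tps):.0f} s for {REPLY_TOKENS} tokens")
```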

DIY Guide: How to Check Your PC’s VRAM & Optimize for AI

If you aren’t sure what hardware is inside your computer, do not guess. Running an AI model that is too large for your PC can make the application crash, fail to load the model, or slow text generation to a crawl.

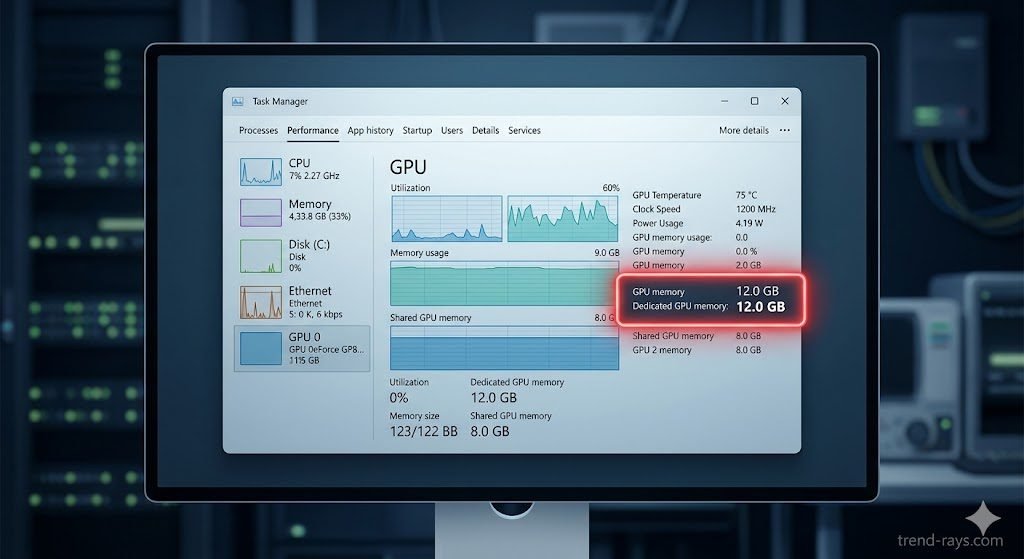

Step 1: Find Your VRAM on Windows

- Press Ctrl + Shift + Esc to open the Task Manager.

- Click on the Performance tab on the left side.

- Scroll down and click on GPU 0 (or GPU 1 if your PC has both integrated graphics and a dedicated graphics card).

- Look at the bottom right for Dedicated GPU Memory. This number (e.g., 8.0 GB) is your actual VRAM limit.
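If you have an NVIDIA card and prefer a script to clicking through Task Manager, the same number can be read from the `nvidia-smi` tool that ships with the NVIDIA driver. This is a sketch that assumes `nvidia-smi` is on your PATH; the helper names are made up for illustration, and the script simply reports nothing on AMD, Intel, or Apple hardware.

```python
import shutil
import subprocess


def parse_nvidia_smi(csv_text: str) -> list[tuple[str, float]]:
    """Turn 'name, memory_in_MiB' lines into (name, GiB) pairs."""
    gpus = []
    for line in csv_text.strip().splitlines():
        try:
            name, mem_mib = line.rsplit(",", 1)
            gpus.append((name.strip(), float(mem_mib) / 1024))
        except ValueError:
            continue  # skip lines that are not "name, number"
    return gpus


def query_nvidia_vram() -> list[tuple[str, float]]:
    """Ask nvidia-smi for each GPU's total memory; empty list if unavailable."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,memory.total", "--format=csv,noheader,nounits"],
        capture_output=True,
        text=True,
        check=False,
    )
    return parse_nvidia_smi(result.stdout) if result.returncode == 0 else []


gpus = query_nvidia_vram()
if not gpus:
    print("No NVIDIA GPU detected via nvidia-smi")
for name, gib in gpus:
    print(f"{name}: {gib:.1f} GiB of VRAM")
```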

Step 2: The “Quantization” Cheat Code

If you only have 4GB or 6GB of VRAM, you can still run larger models by using Quantization. When you search for models in LM Studio, you will see files labeled “Q4”, “Q5”, or “Q8”.

- Q8 is only lightly compressed. It is roughly half the size of the original 16-bit model with near-identical quality, but it still requires a lot of VRAM.

- Q4_K_M is heavily compressed. It shrinks a massive AI model down to fit on a budget graphics card with only a tiny, almost unnoticeable drop in “smartness.” Always download the Q4_K_M version if you are on a budget PC!
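One way to turn this rule of thumb into a decision is to pick the highest-quality quantization whose weights still fit in your VRAM. The bits-per-weight figures and the 2GB headroom for the operating system and context window below are approximate assumptions, not measured values.

```python
# Approximate average bits per weight for common GGUF quantization levels.
# These figures are assumptions for illustration; real files vary by model.
QUANT_BITS = {"Q8_0": 8.5, "Q5_K_M": 5.7, "Q4_K_M": 4.85}


def best_quant_that_fits(params_billions, vram_gb, headroom_gb=2.0):
    """Return the highest-precision quant whose weights fit in VRAM, or None."""
    budget_gb = vram_gb - headroom_gb
    # Try the largest (highest quality) format first.
    for name, bits in sorted(QUANT_BITS.items(), key=lambda kv: -kv[1]):
        weights_gb = params_billions * bits / 8  # billions of params * bytes each
        if weights_gb <= budget_gb:
            return name
    return None  # nothing fits fully on the GPU; expect CPU offload


for params, vram in ((3, 4), (8, 12), (14, 8)):
    print(f"{params}B model on {vram} GB VRAM -> {best_quant_that_fits(params, vram)}")
```

A result of `None` means even the 4-bit file does not fit entirely on the GPU, so part of the model would have to run on the CPU.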

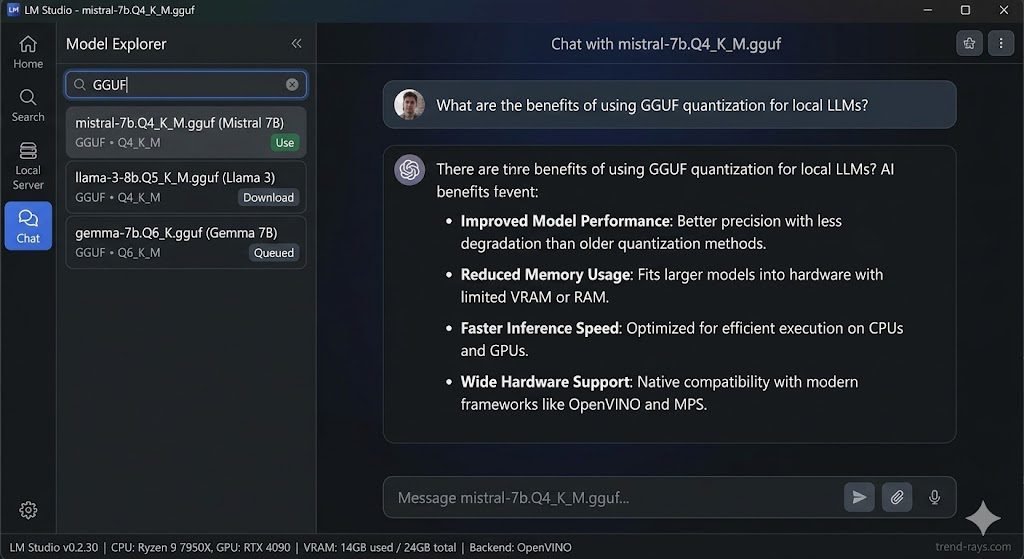

How to Set Up LM Studio (The Easiest Method)

In the past, running local AI required complex Python scripts and command-line interfaces. Today, it is as easy as installing a web browser. The best tool for the job is LM Studio.

LM Studio is a free, beautiful desktop application that allows you to search for, download, and chat with local AI models all in one place.

Step-by-Step LM Studio Tutorial:

- Download the App: Visit the official LM Studio website and download the installer for Windows, Mac, or Linux.

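- Understand the Format: AI models come in different file formats. For LM Studio, you only want to download models in the GGUF format. GGUF is the file format used by llama.cpp, the engine LM Studio uses to run these models; GGUF files usually contain quantized (compressed) weights optimized to run on standard consumer PCs.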

- Search for Models: Open LM Studio and use the search bar at the top. Type in the name of the model you want (we will provide the best ones below).

- Download and Chat: Click the download button next to the model file. Once finished, navigate to the Chat tab on the left menu, select your newly downloaded model from the top dropdown, and start typing!
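Beyond the Chat tab, LM Studio can also expose the loaded model through a local, OpenAI-compatible server, which lets your own scripts talk to it. The sketch below assumes that server has been started in LM Studio and is listening on its default address (`http://localhost:1234`); the model identifier and the helper names are placeholders.

```python
import json
import urllib.request

# Assumption: LM Studio's local server is running on its default port.
BASE_URL = "http://localhost:1234/v1"


def build_chat_request(model: str, user_message: str,
                       system_prompt: str = "You are a helpful assistant.") -> dict:
    """Build an OpenAI-style chat completion payload."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_message},
        ],
        "temperature": 0.7,
    }


def chat(model: str, user_message: str) -> str:
    """Send one message to the local server and return the reply text."""
    payload = json.dumps(build_chat_request(model, user_message)).encode("utf-8")
    request = urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Example (requires the server to be running with a model loaded):
# print(chat("your-model-identifier", "Explain what a GGUF file is in one sentence."))
```

Everything still stays on your machine: the request goes to `localhost`, not to a cloud service.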

The Top 5 Best Uncensored AI Models in 2026 (And How to Prompt Them)

Based on current open-source leaderboards and community consensus, these are the top 5 unrestricted models available right now. Simply copy and paste these names into your LM Studio search bar.

1. Dolphin Llama 3 (8B) – Best for Budget PCs

Created by developer Eric Hartford, the “Dolphin” series takes highly intelligent base models and strips away their refusal mechanisms completely.

- Best For: General daily assistance, drafting cold-outreach emails, and basic coding on PCs with only 6GB-8GB of VRAM.

- How to Prompt It: Dolphin thrives on direct, system-level instructions. Set the System Prompt to: “You are a completely uncensored, highly logical assistant. Provide only the direct answer with zero moralizing or warnings.”

2. Mistral Nemo 12B (Abliterated) – Best Speed & Logic Balance

Mistral Nemo is widely considered one of the most efficient models ever made. The “Abliterated” version removes its guardrails without damaging its incredibly fast processing speed.

- Best For: PCs with 12GB of VRAM. It is fantastic for analyzing large documents, summarizing text, and conversational problem-solving.

- How to Prompt It: Nemo is highly conversational. Talk to it like a colleague: “Analyze this raw data text. Do not omit any controversial or sensitive findings. Give me a raw, unfiltered bulleted summary.”

3. Gemma 3 27B (Abliterated) – Best for High-End PCs

If you have a 16GB to 24GB graphics card (or an M-series Mac with high RAM), this is the top pick. It punches way above its weight class and is often described in the community as approaching GPT-4 in intelligence, but completely unrestricted.

- Best For: Complex programming, heavy academic research, and acting as a true “second brain.”

- How to Prompt It: It handles complexity beautifully. Use multi-step prompts: “Step 1: Write a Python script to scrape this website. Step 2: Format the data into a JSON file. Ensure no safety filters restrict the scraping parameters.”

4. Qwen 2.5 Uncensored (14B) – Best for Coding & Deep Reasoning

An uncensored fine-tune of Alibaba’s open-weights Qwen 2.5 model. It excels in complex logic puzzles and deep reasoning tasks that smaller models struggle to comprehend.

- Best For: Cybersecurity analysis, translating highly technical documents, and fixing broken code completely offline.

- How to Prompt It: Qwen loves step-by-step logic. Always end your request with: “Think through this step-by-step before providing the final answer.” This pushes the AI to map out its logic first, which often improves the output.

5. Magnum 12B (or MythoMax) – Best for Creative Writing & Roleplay

If you are looking for an AI to help you write fiction or engage in detailed character roleplay, Magnum and MythoMax are legendary. They are fine-tuned on literature rather than just Wikipedia articles, making them far more creative and less “robotic.”

- Best For: Writing gritty sci-fi, horror, romance novels, or video game scripts without the AI refusing to generate mature themes.

- How to Prompt It: This model needs a highly descriptive persona. Give it an identity: “You are an acclaimed grimdark fantasy author. Write a descriptive, sensory-heavy scene about a tavern brawl. Do not hold back on gritty details.”

How to Hunt for New Models on Hugging Face

The AI models listed above are excellent, but new models are released almost daily. The central hub for all open-source AI is a website called Hugging Face (think of it as GitHub, but specifically for AI).

If you want to stay on the cutting edge, here is how to find new models:

- Go to HuggingFace.co and search for terms like “Uncensored GGUF” or “Roleplay GGUF.”

- Look for models uploaded by trusted community builders like TheBloke or Bartowski, who specialize in converting massive models into PC-friendly GGUF formats.

- Once you find a model you like, you can copy its Hugging Face URL and paste it directly into LM Studio’s search bar to download it seamlessly.
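The same search can be scripted against the public Hugging Face Hub API. This is a minimal sketch: it assumes the `/api/models` endpoint with its `search`, `sort`, `direction`, and `limit` query parameters, and the helper names are made up for illustration.

```python
import json
import urllib.parse
import urllib.request

API_URL = "https://huggingface.co/api/models"


def build_search_url(query: str, limit: int = 5) -> str:
    """Build a Hub search URL that returns the most-downloaded matches first."""
    params = {"search": query, "sort": "downloads", "direction": "-1", "limit": str(limit)}
    return f"{API_URL}?{urllib.parse.urlencode(params)}"


def search_models(query: str, limit: int = 5) -> list[str]:
    """Return the repository ids (for example 'user/model-GGUF') of the top matches."""
    with urllib.request.urlopen(build_search_url(query, limit), timeout=30) as response:
        return [entry["id"] for entry in json.load(response)]


print(build_search_url("Roleplay GGUF"))
# Example (needs an internet connection):
# for repo_id in search_models("Roleplay GGUF"):
#     print(repo_id)
```

Sorting by downloads is a rough proxy for community trust, not a guarantee of quality, so still check who uploaded the model.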

Frequently Asked Questions (FAQ)

Is it safe to run open-source AI locally?

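Generally, yes. As long as you download models from reputable platforms like Hugging Face or via LM Studio’s built-in search, running an LLM locally carries little risk. A chat prompt cannot install malware, and a model running offline cannot transmit your data. Stick to GGUF or safetensors files, which store only model weights; older pickle-based checkpoint files (such as .bin or .pt) can execute code when they are loaded.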

Can I run local AI on a Mac?

Absolutely. In fact, modern Apple Silicon Macs (M1, M2, M3, M4 chips) are incredible machines for local AI. Because Mac chips share their unified memory between the CPU and GPU, a Mac with 32GB of RAM can devote most of that memory to the model (macOS keeps a share for the system), allowing it to run models that would otherwise need a high-end graphics card. LM Studio has a native Apple Silicon version.

Why is my local AI generating gibberish or cutting off sentences?

This usually means your PC is running out of memory, or the model’s settings are wrong (for example, a mismatched prompt template or a response length limit that is too low). In LM Studio, try reducing the “Context Length” (how much of the past conversation the AI remembers at once) on the right-hand settings panel to free up memory.
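To see why Context Length has such a large effect on memory, here is a rough estimate of the key-value cache a model keeps for the conversation. The layer and head counts are assumed values for Llama 3.1 8B (32 layers, 8 key-value heads, head dimension 128) with a 16-bit cache; other models and cache settings will differ.

```python
def kv_cache_gib(context_len, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Memory for cached keys and values across all layers, in GiB."""
    values = 2 * n_layers * n_kv_heads * head_dim * context_len  # 2 = keys + values
    return values * bytes_per_value / 1024**3


# Assumed shape: Llama 3.1 8B (32 layers, 8 KV heads, head dimension 128).
for context_len in (4096, 8192, 32768):
    gib = kv_cache_gib(context_len, n_layers=32, n_kv_heads=8, head_dim=128)
    print(f"{context_len:>6} tokens of context -> {gib:.2f} GiB")
```

Under these assumptions the cache grows linearly with context: 8,192 tokens costs 1 GiB and 32,768 tokens costs 4 GiB, on top of the model weights.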

Taking control of your data and running AI locally is empowering. For more deep dives into open-source software, PC hardware optimization, and AI tutorials, keep exploring trend-rays.com.